8 local LLM settings most people never touch that fixed my worst AI problems

Summary

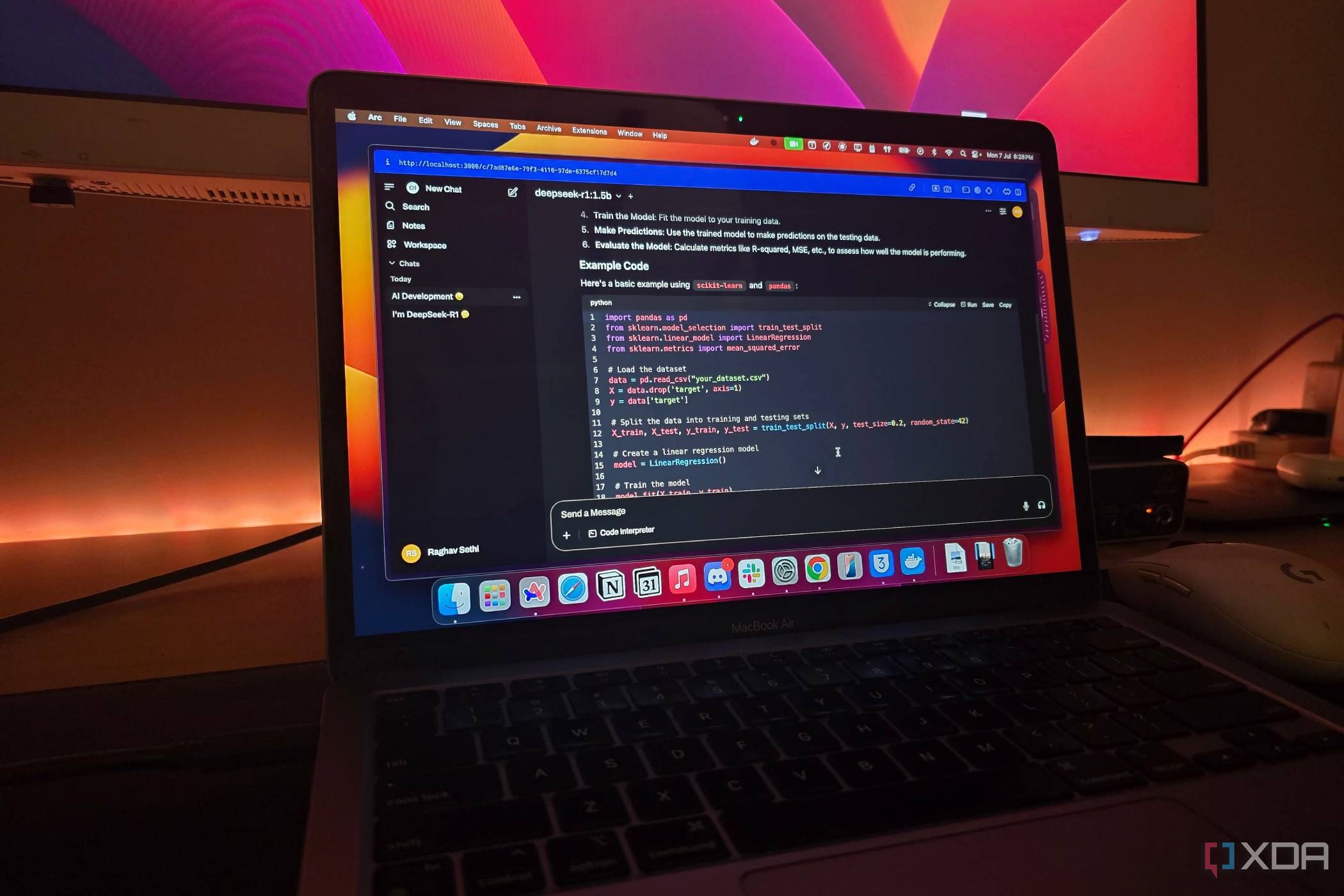

Running a local LLM can be simple, but users often run into problems such as repetitive responses and lost context. The article argues that adjusting a handful of rarely touched settings resolves many of these problems and makes local models far less frustrating to use.

Key Insights

What is quantization and why should I use it for running local LLMs?

Quantization is a technique that reduces the precision of a model's parameters from high-precision floating-point numbers (like FP32) to lower-precision formats (like INT8). Because an FP32 value takes 4 bytes and an INT8 value takes 1 byte, this shrinks the memory needed for the weights by roughly a factor of four and lowers computational requirements, usually with only a modest loss in accuracy. For users with limited hardware, quantization makes it possible to run larger or more capable models locally, which makes it an essential optimization for mid-range laptops and other resource-constrained systems.
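
To make the idea concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. It is illustrative only: real runtimes use more elaborate block-wise schemes, and the function names (`quantize_int8`, `dequantize_int8`, `weight_bytes`) and the sample weights are assumptions made up for this example, not part of any library.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [v * scale for v in quantized]


def weight_bytes(n_params, bits_per_param):
    """Memory needed for the weights alone, ignoring runtime overhead."""
    return n_params * bits_per_param // 8


# Hypothetical sample weights, chosen only for illustration.
weights = [0.12, -0.5, 0.33, 1.0, -0.98]
quantized, scale = quantize_int8(weights)
restored = dequantize_int8(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", quantized)
print("largest round-trip error:", max_error)

# Hypothetical 7-billion-parameter model.
n_params = 7_000_000_000
print("FP32 weights (GB):", weight_bytes(n_params, 32) / 1e9)
print("INT8 weights (GB):", weight_bytes(n_params, 8) / 1e9)
```

The round-trip error per weight is bounded by half the scale, which is the accuracy cost paid for the smaller footprint. For the hypothetical 7-billion-parameter model, the weights alone drop from about 28 GB at FP32 to about 7 GB at INT8.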

What are batch size and sequence length settings, and how do they affect my local LLM performance?

Batch size and sequence length are configuration parameters that directly affect latency and RAM usage when running local LLMs. Batch size determines how many inputs the model processes simultaneously, while sequence length controls the maximum length of text the model can handle at once. Raising either one increases memory consumption, so adjusting them lets you trade speed against memory based on your hardware. Tuning these parameters by benchmarking different configurations helps identify the best settings for your specific system and use case.
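
One way to see why these two settings drive RAM usage is to estimate the size of the key/value cache that a transformer keeps during generation. The sketch below assumes a standard transformer with a key tensor and a value tensor per layer stored at 2 bytes per value (FP16). The function name `kv_cache_bytes` and the model dimensions (32 layers, 8 key/value heads, head size 128) are hypothetical values chosen for illustration, not figures from the article.

```python
def kv_cache_bytes(batch_size, seq_len, n_layers, n_kv_heads, head_dim,
                   bytes_per_value=2):
    """Estimate key/value cache size for a transformer.

    The leading factor of 2 accounts for storing both keys and values
    in every layer, for every position of every sequence in the batch.
    """
    return (2 * n_layers * n_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


# Hypothetical model dimensions, for illustration only.
model = {"n_layers": 32, "n_kv_heads": 8, "head_dim": 128}

for batch_size, seq_len in [(1, 2048), (1, 4096), (4, 4096)]:
    size = kv_cache_bytes(batch_size, seq_len, **model)
    print(f"batch={batch_size} seq_len={seq_len}: {size / 2**20:.0f} MiB")
```

The estimate grows linearly in both settings: doubling the sequence length or the batch size doubles the cache. It covers only the cache, not the weights or other buffers, so treat it as a starting point for benchmarking rather than an exact prediction.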