SK hynix and SanDisk announce new High Bandwidth Flash — speedy HBF standard is targeted at inference AI servers

Summary

SK hynix and SanDisk have announced High Bandwidth Flash (HBF), a new high-speed flash standard targeted at AI inference servers. It aims to combine bandwidth close to that of HBM DRAM with terabyte-scale capacity at a lower cost per bit.

Key Insights

What is High Bandwidth Flash (HBF) and how does it differ from traditional storage solutions?

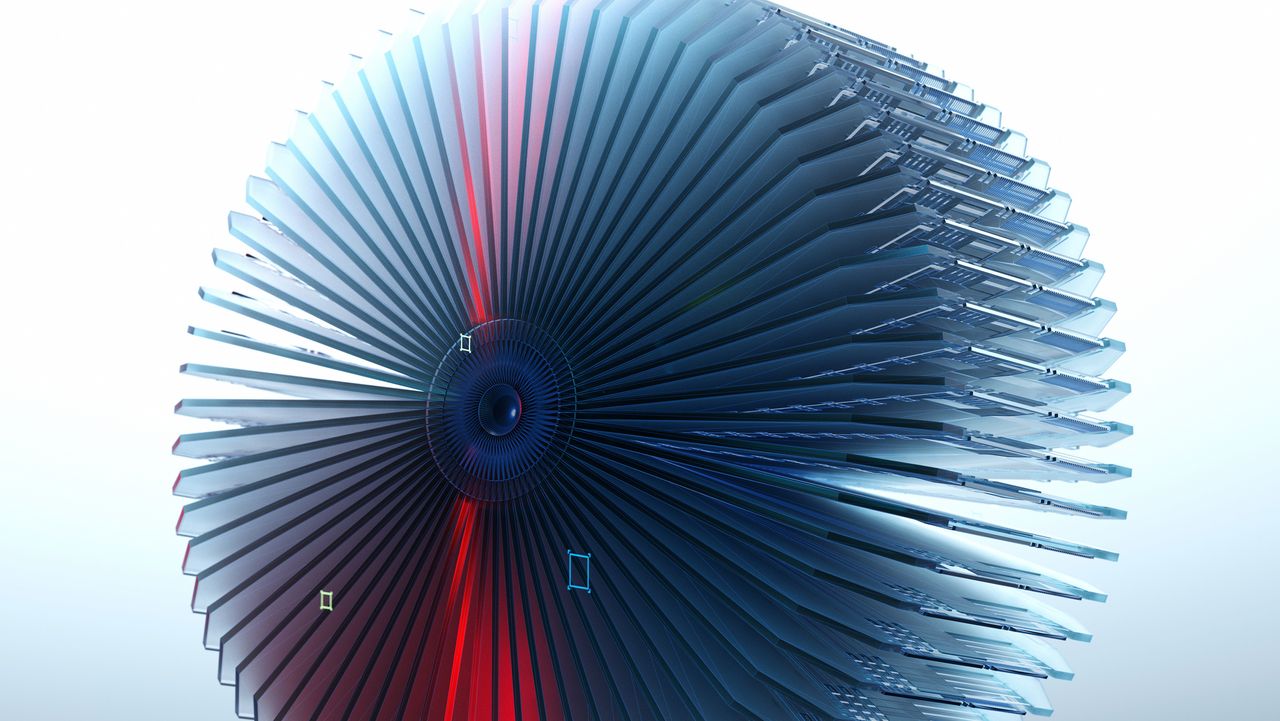

High Bandwidth Flash (HBF) is a NAND flash architecture that stacks multiple 3D NAND dies and pairs them with high-speed signaling to deliver both high capacity and fast data access. Unlike traditional SSDs, which prioritize either speed or capacity, HBF is claimed to reach up to 800 GB/s of aggregate bandwidth while supporting terabyte-scale capacity. It does so through die stacking, Through-Silicon Vias (TSVs) that connect the dies vertically, and DDR synchronous signaling. The headline claim is that HBF delivers performance within 2.2% of high-end HBM (High Bandwidth Memory) DRAM while offering significantly greater capacity at a lower cost per bit, which makes it particularly suited to AI inference workloads where both speed and large capacity are critical.
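To put the bandwidth figure in context, here is a rough back-of-envelope sketch of how long one full read of a model's weights would take at the quoted peak rate. Only the 800 GB/s figure comes from the text above; the 400 GB model size and the function name `sweep_time_ms` are hypothetical, chosen purely for illustration, and a real device would sustain less than its peak.

```python
def sweep_time_ms(weights_gb: float, bandwidth_gb_per_s: float) -> float:
    """Milliseconds needed to read `weights_gb` once at a sustained bandwidth."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return weights_gb / bandwidth_gb_per_s * 1000.0


# Peak aggregate bandwidth quoted in the text ("up to 800 GB/s").
HBF_PEAK_GB_PER_S = 800.0

# ASSUMPTION: a hypothetical 400 GB set of model weights, not from the source.
ASSUMED_WEIGHTS_GB = 400.0

if __name__ == "__main__":
    ms = sweep_time_ms(ASSUMED_WEIGHTS_GB, HBF_PEAK_GB_PER_S)
    print(f"{ASSUMED_WEIGHTS_GB:.0f} GB at {HBF_PEAK_GB_PER_S:.0f} GB/s: "
          f"{ms:.0f} ms per full read")
```

Under these assumptions a full pass over the weights takes about half a second. The same function can be called with any other bandwidth to compare storage tiers, provided the numbers used are measured rather than assumed.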

Why is HBF specifically designed for AI inference servers rather than general consumer use?

HBF is purpose-built for AI inference servers because these systems face a 'memory wall': they need massive storage capacity and extremely fast data access at the same time. Inference workloads must hold large language models in memory and serve user queries at scale, so the system has to retrieve data and return results quickly. Traditional HBM DRAM is fast but expensive and limited in capacity, while conventional SSDs offer large capacity but are too slow. HBF bridges this gap as a supporting layer between HBM and traditional storage, offering bandwidth comparable to HBM together with terabyte-scale capacity at lower cost. The technology is expected to see significant demand around 2030 as storage needs for inference output grow rapidly.